Good info all round; there are clearly some clever people at work here.

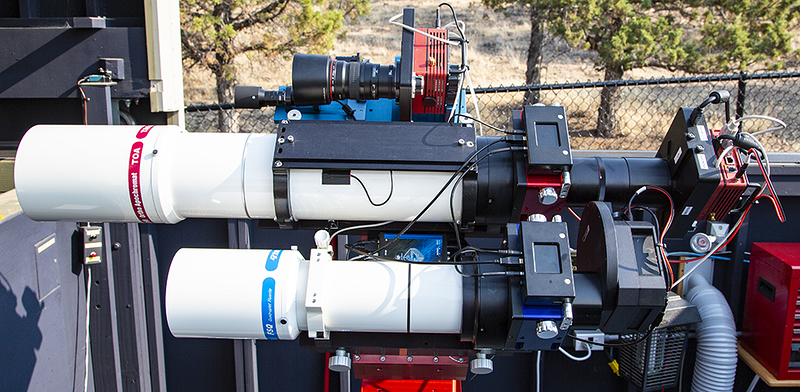

I’ve also been using a dual scope setup for a while now:

- Avalon Linear Mount

- Takahashi FSQ 85 with reducer / Moravian 8300

- WO 71 / Moravian 8300 / Avalon XGuider for FOV alignment

- Takahashi FS60 / Lodestar2 for guiding

The fields of view of both image trains are very similar, and the main aim is to capture L with one and RGB with the other (or, for narrowband, a 2/1 split that suits the target, as I can’t afford a duplicate Astrodon).


I’ve also been successful in running a master/slave setup with two instances of SGP. It runs fully automated with the exception of connecting the cameras: Moravian have a great driver which allows connection of the camera by serial number, but unfortunately the numbers entered in the respective instances get muddled up, so manual connection is needed. The slave instance is dumb: it only acquires images and runs the focus routine, and everything else is done by the master instance. Because of the separate guide scope, the slave only really loses images during the meridian flip, as long as I don’t dither.

So onto dithering! Unfortunately I don’t have an unguided mount, so the first solution is out (in fact the Avalon must be guided due to the way it’s constructed). Because of the CCDs I won’t be able to do short exposures, and because exposure times for me are generally similar between the two scopes, any overlap with a dither will lose a fair amount of integration time. So really I would need some synchronisation to happen.

Luckily I was thinking a lot on this yesterday (after commenting in the other thread) and nothing beats a good challenge! So I’ve had a look at all the options to interface with SGP. Unfortunately, at the moment there is not enough information and access available to implement a ‘clever solution’. Having said that, I did come across another idea which might work well with my particular setup (but maybe not for other configurations). It’s also not ideal as it still wastes time, but it’s better than nothing I guess.


The basic idea is that (via the SGP API) I monitor the camera status of the master system, looking for it to change from anything to ‘INTEGRATING’. Once this happens I know that an exposure has started and (assuming I know the master exposure time beforehand) I know how much time I have available to expose on the slave system before ‘other stuff’ such as dithering or focusing might occur. Now, if I select a slightly shorter exposure time on the slave system (say 5s less, assuming that download times are equal) I can guarantee that the slave system will have finished its exposure before the master system. I could of course also fit in several exposures (say three) if the slave exposure time is much shorter, as long as they are all finished by the time the master has finished its exposure. After finishing an exposure the slave system waits again until the master camera status switches to ‘INTEGRATING’ on the next image, and the game begins again. Practically there are obvious drawbacks: for example, when the master system focuses, the slave has to wait. Also, when the slave system focuses it might miss the master system starting an exposure, and it will then have to wait until the next exposure. But that doesn’t worry me too much as the focus runs don’t happen that often.
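To put rough numbers on that timing budget, here is a small Python sketch (not part of my actual app). The function name, the parameters and the 5s default margin are just for illustration. It assumes the last slave download may overlap with the master download, which matches the ‘download times are equal’ assumption above.

```python
import math


def slave_frames_per_master(master_exposure_s, slave_exposure_s,
                            slave_download_s=0.0, margin_s=5.0):
    """Count slave exposures that end at least margin_s before the master does.

    Assumption (illustrative only): n slave frames need
    n * exposure + (n - 1) * download seconds, because the final download
    can run while the master is downloading its own frame.
    """
    if slave_exposure_s <= 0:
        raise ValueError("slave exposure must be positive")
    budget = master_exposure_s - margin_s
    frames = math.floor((budget + slave_download_s)
                        / (slave_exposure_s + slave_download_s))
    return max(0, frames)


# A 300s master frame leaves room for one 295s slave frame,
# while a 600s master frame leaves room for three 180s slave frames.
print(slave_frames_per_master(300, 295, slave_download_s=10))
print(slave_frames_per_master(600, 180, slave_download_s=10))
```

If the result is 0, the slave exposure is too long for the chosen margin and would still be running when the master finishes.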

As I’m lying in bed with flu, I’ve fired up my computer and created a rough and ready .NET app which does exactly that. When started, it polls the API until the master camera starts integrating, then closes. SGP’s script function also allows a call to an EXE file, so the slave instance can call this ‘mini app’ from a script. The slave instance then pauses until the app closes, i.e. until the master camera starts integrating, and only then proceeds with its own exposure.
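For anyone who wants to try something similar, the waiting logic can be sketched in a few lines of Python. This is not my .NET code, and it deliberately contains no SGP API call: `get_status` is a hypothetical callable that you would have to write yourself to return the master camera state as a string. The timeout and the injectable `sleep`/`clock` are my own additions so the loop can be tested without a camera.

```python
import time


def wait_for_integrating(get_status, poll_interval_s=0.5, timeout_s=None,
                         sleep=time.sleep, clock=time.monotonic):
    """Block until the status changes from anything else to 'INTEGRATING'.

    get_status is a hypothetical callable returning the master camera
    state; how it talks to SGP is not shown here.
    Returns True on a transition, False if timeout_s elapses first.
    """
    deadline = None if timeout_s is None else clock() + timeout_s
    previous = get_status()
    while True:
        if deadline is not None and clock() >= deadline:
            return False
        sleep(poll_interval_s)
        current = get_status()
        # Only a fresh start counts: an exposure that was already running
        # when we began polling is ignored, since part of it is gone.
        if current == "INTEGRATING" and previous != "INTEGRATING":
            return True
        previous = current


# Simulated master: mid-exposure, then download, idle, and a new exposure.
states = iter(["INTEGRATING", "DOWNLOADING", "IDLE", "INTEGRATING"])
started = wait_for_integrating(lambda: next(states), sleep=lambda s: None)
print(started)
```

Waiting for the transition rather than the state itself means the helper never releases the slave halfway through a master exposure, at the cost of sometimes waiting a full frame.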

There is a catch though. Unfortunately SGP only allows us to run a script before/after each event and not before/after each image. So this really spoils the fun a bit, but fortunately (at least for me) there is a workaround. This is possible because my slave system usually only has one filter (L or one of the narrowband filters). So instead of creating just one event and taking all images there, I create two events with the exact same details. If I then set SGP to ‘rotate through events’, the slave system will alternate between those two identical events but, crucially, allow me to run the script every time an image is taken.

I know this is clunky at best, but I think it’s all that is possible just now as there is no way to get more detailed information or hook into SGP. I’ve not used this on the real setup yet, but I’ve run a simulation and it worked well. There is still a lot more testing to do, but at least it could be something that might work for my scenario.

Mike
